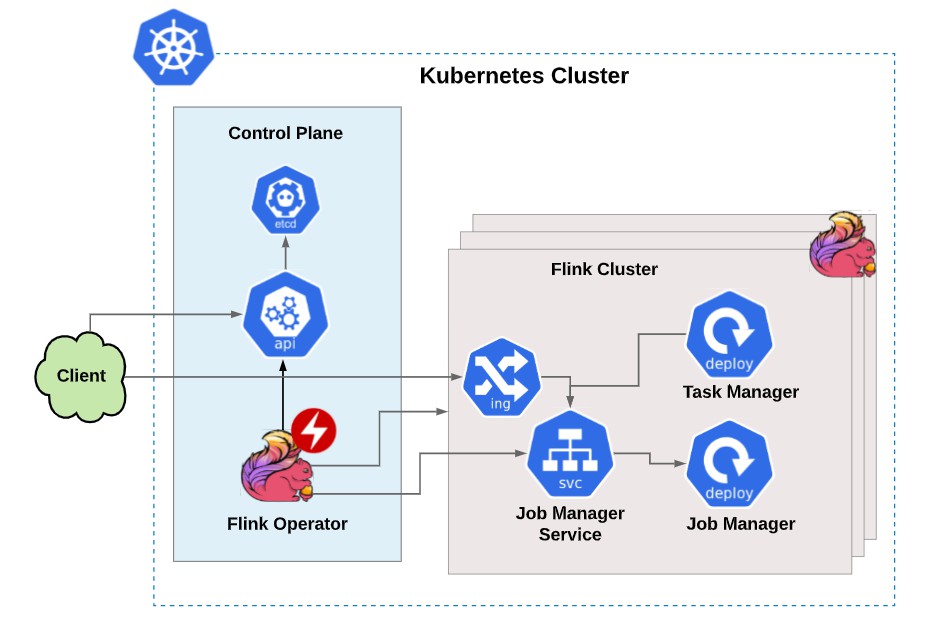

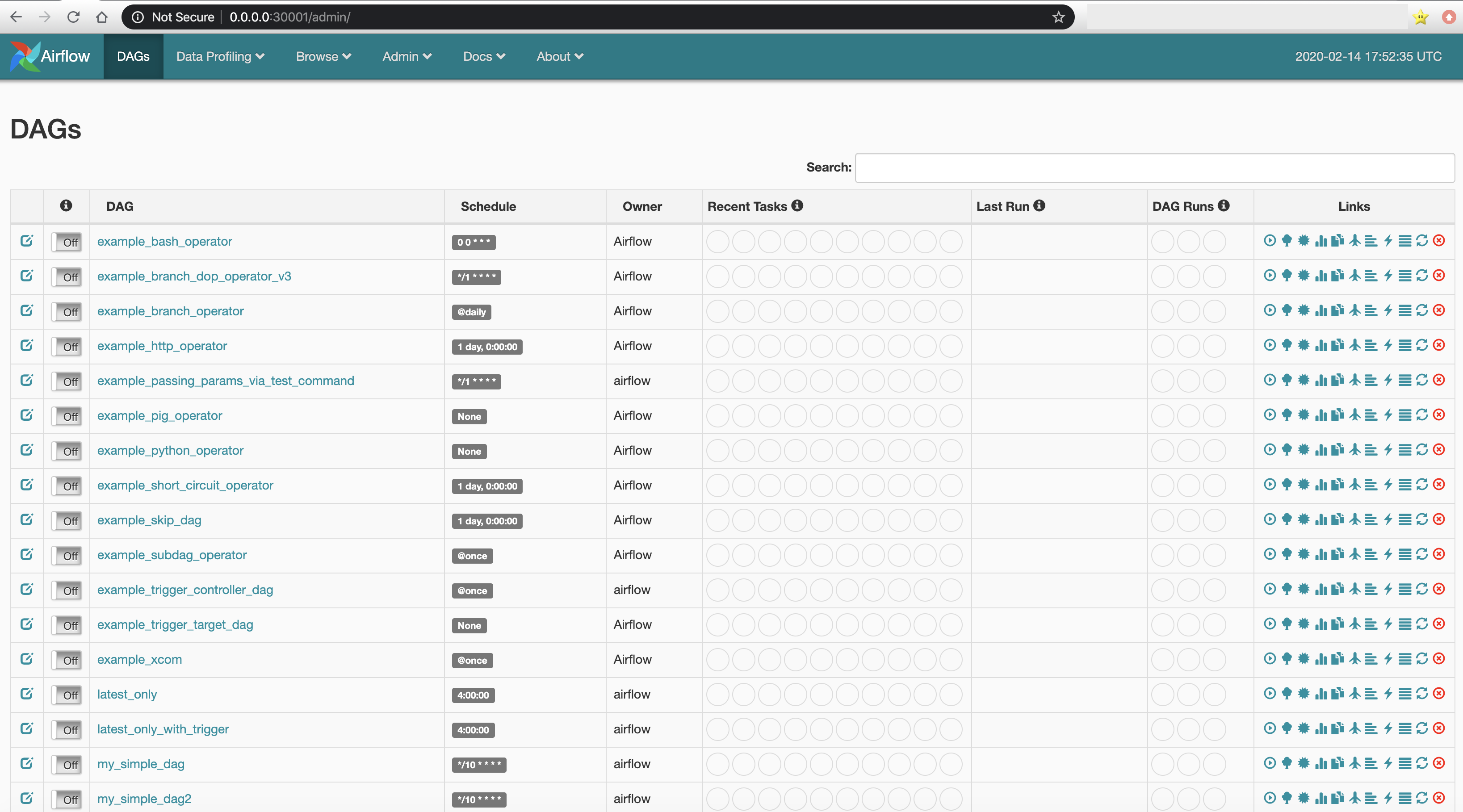

If you do not already have a working Airflow installation and Kubernetes cluster, you should consider some simpler orchestration options to get started, such as GitHub Actions, Kestra, or even a simple cron-based deployment. If you decide to proceed with Airflow, you can install it locally on Kubernetes using Minikube and the Airflow Helm chart.

The example DAG lives in a file named `cloudquery.py`. It imports `datetime` and `timedelta` from `datetime`, `DAG` from `airflow`, `PythonOperator` (from a `python_operator` module), `KubernetesPodOperator` (from a `kubernetes_pod` module), and `models as k8s` from `kubernetes.client`. A comment marks the settings to change to match your requirements: set `start_date` in `default_args` to today's date, but keep it static. The DAG is declared as `with DAG('cloudquery_sync', default_args=default_args, schedule_interval=timedelta(days=1)) as dag:` and contains one task, `cloudquery_operator = KubernetesPodOperator(...)`, with `task_id='cloudquery_sync'`, `name='cloudquery-sync'`, `namespace='airflow'`, and an `image` whose reference begins with `ghcr.`

For Amazon EKS with AWS Fargate, two further parameters apply. `cluster_name` is the name of the Amazon EKS cluster to apply the AWS Fargate profile to (templated). `pod_execution_role_arn` is the Amazon Resource Name (ARN) of the pod execution role to use for pods that match the selectors in the AWS Fargate profile.

In addition, the Airflow Kubernetes executor scales more efficiently than Celery, even when KEDA is used to scale Celery. When we need to use other operators, we first measure their resource consumption. We know they are safe to use because the tasks use few resources and we can cap the number of tasks on the same worker with a parallelism limit.

You will need to be able to set up DAGs. When Airflow is deployed to Kubernetes, this is done with Persistent Volumes and Persistent Volume Claims. For example, to map a local directory at `/data/airflow/dags` (inside the Minikube container, if not running on bare metal), you create a Persistent Volume and a Persistent Volume Claim that point at that path.
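The Persistent Volume and Persistent Volume Claim for `/data/airflow/dags` could look roughly like the sketch below. It builds the two manifests as Python dictionaries and prints them as JSON, which `kubectl` accepts. The resource names, the `manual` storage class, the `1Gi` size, and the read-only access mode are all assumptions made for illustration; they do not come from the original guide.

```python
import json

DAGS_PATH = "/data/airflow/dags"  # directory named in the text

# Hypothetical values: rename and resize to match your cluster.
PV_NAME = "airflow-dags-pv"
PVC_NAME = "airflow-dags-pvc"
NAMESPACE = "airflow"
STORAGE_CLASS = "manual"
STORAGE_SIZE = "1Gi"


def build_manifests(dags_path=DAGS_PATH):
    """Return [PersistentVolume, PersistentVolumeClaim] as plain dicts."""
    persistent_volume = {
        "apiVersion": "v1",
        "kind": "PersistentVolume",
        "metadata": {"name": PV_NAME},
        "spec": {
            "capacity": {"storage": STORAGE_SIZE},
            "accessModes": ["ReadOnlyMany"],
            "storageClassName": STORAGE_CLASS,
            # hostPath exposes a directory on the node (the Minikube container).
            "hostPath": {"path": dags_path},
        },
    }
    persistent_volume_claim = {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": PVC_NAME, "namespace": NAMESPACE},
        "spec": {
            "accessModes": ["ReadOnlyMany"],
            "storageClassName": STORAGE_CLASS,
            # Bind the claim explicitly to the volume defined above.
            "volumeName": PV_NAME,
            "resources": {"requests": {"storage": STORAGE_SIZE}},
        },
    }
    return [persistent_volume, persistent_volume_claim]


if __name__ == "__main__":
    # Wrap both objects in a v1 List so they can be applied in one call.
    bundle = {"apiVersion": "v1", "kind": "List", "items": build_manifests()}
    print(json.dumps(bundle, indent=2))
```

If the script is saved as, say, `pv_manifests.py` (a hypothetical file name), its output can be piped to `kubectl apply -f -`. The claim can then be referenced from the Airflow Helm chart's DAG persistence settings.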
Orchestrating CloudQuery Syncs with Apache Airflow and Kubernetes

Apache Airflow is a popular open source workflow management tool. It can be used to schedule CloudQuery syncs, optionally retry them, and send notifications when syncs fail. In this guide, we will show you how to get started with Airflow and CloudQuery. We will use the KubernetesPodOperator, which allows us to run tasks in Kubernetes pods. This guide assumes that you have a working Airflow installation and an available Kubernetes cluster, and experience operating both.

Because the KubernetesPodOperator automatically spins up the pods that run the Docker image, we do not need to manage the infrastructure. Since the dbt models are containerized with Docker, dependency conflicts will not be a problem. A simple sample shows how to use Google Composer with the KubernetesPodOperator; this option requires monitoring Composer's resource usage.

The DAG in this guide is probably the simplest example we could write to show how the KubernetesPodOperator works. The operator's main parameters are:

- `name` (str): name of the pod in which the task will run; it is used to generate a pod id.
- `startup_timeout_seconds` (int): timeout in seconds to start up the pod.
- `labels` (dict): labels to apply to the pod.
- `volumes` (Volume): volumes for the launched pod. Includes ConfigMaps and PersistentVolumes.
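A minimal sketch of such a DAG, assembled from the fragments that survive in this post, might look like the following. Several things here are assumptions rather than the guide's own values: the `start_date`, the retry settings, the `labels` and `startup_timeout_seconds` values, and the image, which is left as a placeholder because its reference is cut off in the source. The import path for `KubernetesPodOperator` depends on the installed provider version (newer releases move it to `operators.pod`), and the command arguments for the CloudQuery sync are omitted.

```python
from datetime import datetime, timedelta

# Change start_date to a fixed date (for example today's), but keep it static.
default_args = {
    "owner": "airflow",                    # assumption
    "start_date": datetime(2024, 1, 1),    # hypothetical static date
    "retries": 1,                          # assumption: retry a failed sync once
    "retry_delay": timedelta(minutes=5),   # assumption
}

# Placeholder only: the image reference is truncated in the source text.
CLOUDQUERY_IMAGE = "REPLACE_WITH_CLOUDQUERY_IMAGE"

# Arguments for the pod task. task_id, name, and namespace come from the text.
pod_kwargs = {
    "task_id": "cloudquery_sync",
    "name": "cloudquery-sync",
    "namespace": "airflow",
    "image": CLOUDQUERY_IMAGE,
    "labels": {"app": "cloudquery"},       # assumption
    "startup_timeout_seconds": 300,        # assumption
}

try:
    from airflow import DAG
    # Path used by older cncf.kubernetes provider releases; adjust if needed.
    from airflow.providers.cncf.kubernetes.operators.kubernetes_pod import (
        KubernetesPodOperator,
    )
except ImportError:
    # Lets the settings above be inspected where Airflow is not installed.
    DAG = None

if DAG is not None:
    with DAG(
        "cloudquery_sync",
        default_args=default_args,
        schedule_interval=timedelta(days=1),  # run the sync once a day
    ) as dag:
        cloudquery_operator = KubernetesPodOperator(**pod_kwargs)
```

Keeping the task arguments in a plain dictionary is only a convenience for this sketch; passing them directly to `KubernetesPodOperator` works the same way.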
